Notes on the 7-hour marathon interview with Saining Xie

Some observations from the first and only podcast interview with Saining Xie.

Saining Xie is an Assistant Professor of Computer Science at NYU and a co-founder and CTO of AMI Labs. He argues that human-like intelligence will require moving beyond language-only systems toward models that learn from continuous signals in the real world.

Please watch the full podcast here, and support its channel Zhang Xiaojun Podcast.

1. Teaching AI How to Love

Early in the interview, Xie recalls a phone call with Ilya Sutskever. The call was meant to be about whether Xie might join Ilya’s company at the time. Instead, they spent the whole time talking about how to teach AI to love.

There was no action plan at the end, but the anecdote goes to show the kind of question Xie thinks is worth spending time on. He sees the ability to love as basic to the future stability of AI. At the same time, he sees the other side of the coin: if a system can love, hate may follow. That is part of why he’s drawn to world models not as a purely technical goal, but as a path to safer intelligence.

2. On the Nature of Research

Xie said he dislikes the word “influence.” He agrees with his friend Kaiming He (whom he mentioned 84 times during the conversation) that the nature of research is to share knowledge. A paper should help us understand something, which matters much more than performance numbers or academic posturing.

Xie invokes Hannah Arendt’s well-known distinction between influence and understanding. In a 1964 interview she called the desire to be influential ‘a masculine question’ and said she preferred to understand. As Arendt put it, the purpose of this pursuit is “understanding”: living beings need to be understood.

That is the version of research Xie wants to do. You arrive at some understanding, you write it down, you put it out into the world. If it resonates, the understanding spreads — and somewhere along the way, you feel understood too. He described that loop as something close to family.

Xie added: influence is self-centred; mutual understanding raises the collective intelligence of the whole planet. And more intelligence benefits everyone.

3. Research Taste

Xie said good research taste is hard to define directly. But he was clear on what bad taste looks like.

Bad taste, in his view, comes from chasing appearances: conference acceptances, praise, hype, quick results, status—all the external signs that make something look successful.

He linked this to a line from the Diamond Sutra: all appearances are illusory; what you see on the surface is often not what matters most.

That idea applies far beyond research—to products, marketing, writing, and life in general. It is easy to do things because they look right or because society rewards them. It is much harder to keep asking what is fundamental.

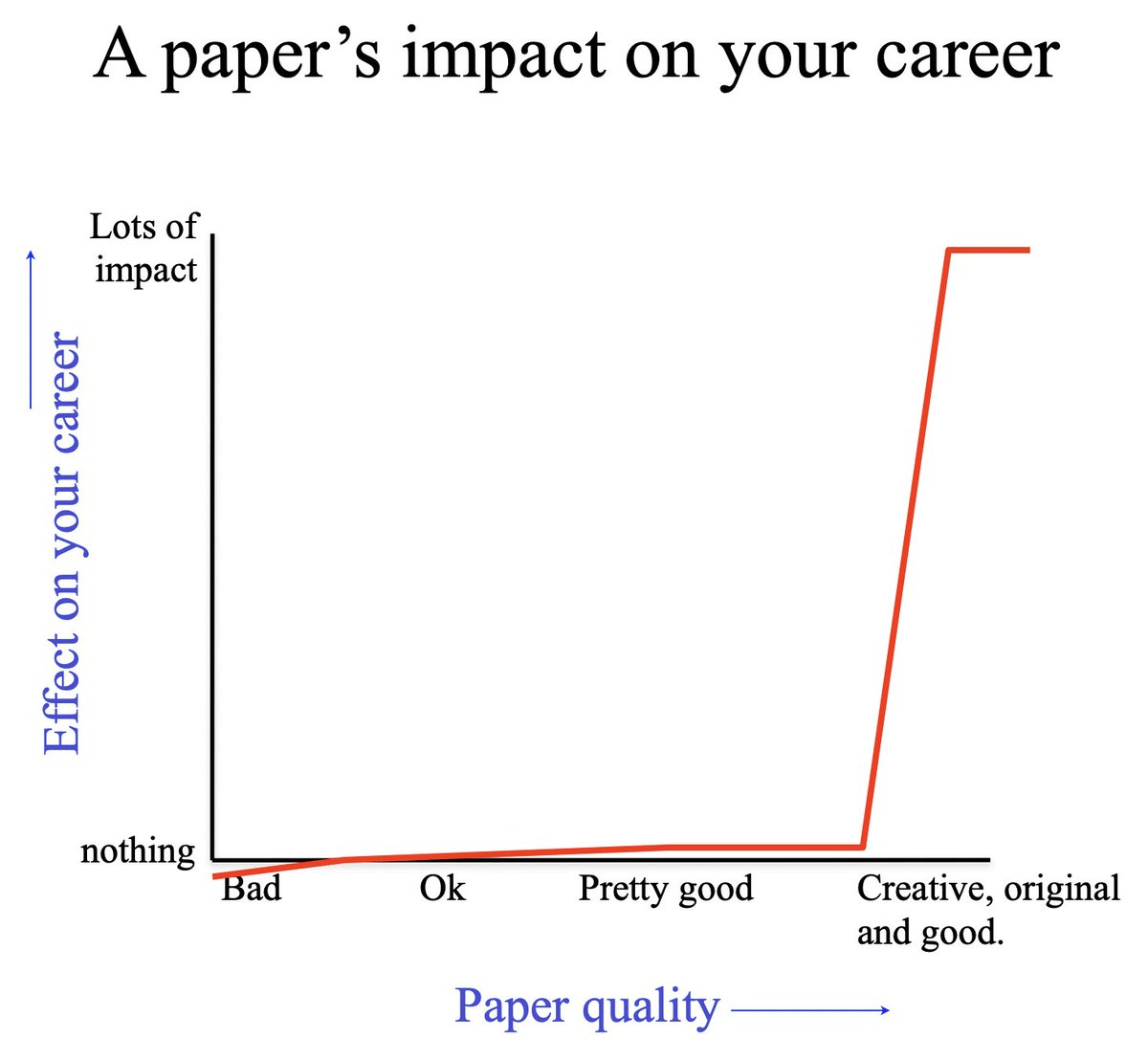

Xie mentioned the non-linear research career of Professor Bill Freeman. Strong researchers are often driven by long-term passion and pure curiosity. They care less about short-term reception and more about whether their work eventually reaches its fullest expression.

4. Doing Research and Filmmaking

Xie mentioned many times that he loves movies. His childhood dream was to become a director, though it quickly faded.

As an adult, he soon realised that doing research is no different from making a film.

A good paper is not just a pile of methods and results. The techniques matter, but what matters just as much is the story behind the paper: what decisions were made, why they were made, what changed, what the researchers saw that others missed, and whether the reader leaves with a new path to explore.

He puts this in personal terms as well. Quoting Martin Scorsese’s idea that the most creative things are the most personal, Xie argues that long-term work needs an inner force behind it. Technique matters. But so does finding “the fire in your heart” and using your own perspective to guide the work.

5. Video as Non-Static

On studying world models through video, Xie mentioned his admiration for Chinese directors Jia Zhangke and Bi Gan, both known for long takes.

Xie summarised Jia Zhangke’s idea: what makes cinema interesting is that every frame on the timeline can be expanded through cinematic space, which he called “blank space (留白)”. Although we see a sequence of frames, what lies behind it is the state of the world and global information across space.

At the same time, this raises a harder question. Different people could infer different things from the same scene. What exactly should a universal world model reconstruct? Is there one shared world behind perception, or is part of the difficulty that interpretation is never fully identical?

Xie did not offer a final answer. But that may be part of the point. World models are difficult not only because they must recognise what is visible, but also because they must learn the hidden structure behind what we see.

6. What Problem Does Computer Vision Solve?

This is where video becomes interesting. A still image gives us a scene, but video gives us continuity, motion, and clues about cause and effect. Xie draws on cinema to argue that each frame points beyond itself to a larger world behind the image. This matters because it shows what he thinks current AI lacks. Humans process the world by inferring space, objects, continuity, and hidden structure across time. In a sense, seeing is a form of modelling. A system that only labels pixels is doing something far narrower than perception as humans experience it.

In Cambrian-S, Xie and his co-authors argue that multimodal AI must move beyond simple image description toward what they call “spatial supersensing.” The key idea is that real progress will require models that go beyond labelling what they see and build internal structure from continuous experience.

Xie uses an analogy from autonomous driving levels to explain the path from language-only models to systems that can perceive continuous visual streams, infer spatial structure, and eventually predict the world. The exact labels in the interview are informal, but the underlying argument is consistent with Cambrian-S:

L0: Pure LLM. It cannot see images or video and knows the world only through language, like the prisoners in Plato’s cave.

L1: Current multimodal systems. Show them an image, and they can answer questions about it.

L2: Systems that can handle continuous visual streams, not just still images, and understand what is happening.

L3: Spatial cognition. At every point in time, the system can infer the real 3D space behind the pixels.

L4: A predictive world model—an agent that truly lives in the real world.

In Xie’s view, computer vision tackles a basic problem that intelligence must solve: to build lasting internal models of the world, use them to organise incoming information, and predict what happens next.

7. Representation Learning Sits Underneath Everything Else

A related theme is representation learning. Xie keeps returning to how a system learns the right internal representations in the first place. On his personal site, he describes his work as pushing the boundaries of multimodal intelligence and “spatial supersensing” across images and videos. The emphasis is on both outputs and the underlying structure that enables a model to perceive and reason well.

This is also why he worries that language can become a crutch. If vision only serves language, the model may never learn the deeper abstractions that perception should provide on its own. In his view, good intelligence may depend less on piling up more text and more on learning stronger internal representations from the world itself.

8. LLMs Are Anti–Bitter Lesson

The Bitter Lesson (Rich Sutton) says that AI history keeps showing the same pattern: general-purpose methods that make use of large amounts of computing power beat human-designed “clever” solutions in the long run. In other words, don’t overvalue human prior knowledge; let machines learn for themselves.

Many see LLMs as a perfect example of the Bitter Lesson. But Xie argues the opposite. He thinks LLMs are anti–Bitter Lesson.

Language itself is one of humanity’s most refined designs, shaped over thousands of years. It has syntax, structure, logic. From day one, LLMs have worked within frameworks built on human priors. As Xie put it: “Language is an extremely refined human structure.” Leaning too heavily on language risks distorting the core of vision research.

In Xie’s view, the path truer to the Bitter Lesson might be to abandon language’s structure and learn from something more basic: pixels, or even something other than pixels (a point taken up again in section 10).

This points to a deeper question: what really counts as intelligence? If a system only ever works within rules humans laid down for it, in what sense is it thinking? Real intelligence, the argument goes, would be a system that breaks past our categories and works out the basic logic of the world on its own.

9. Robotics as a Problem of Intelligence

The same logic appears in the interview’s discussion of robotics. Xie’s view is that robot hardware has advanced much faster than robot intelligence. The dancing robots at the Chinese New Year gala were spectacular, but Xie said that in a private conversation, the research team behind them was worried about the missing piece: the “brains”.

That fits the broader public direction of AMI Labs, which aims to build AI systems that understand the real world and apply them in robotics, automation, healthcare, and wearable devices. The emphasis is on systems that are reliable and controllable in messy real environments, not only impressive in demos.

10. Goal, Not a Route

Xie’s definition of a world model is simple: given the current state of a system and an action, the AI predicts what comes next. That prediction is what guides the decision.
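To make that definition concrete, here is a minimal sketch in Python. It is a toy under stated assumptions, not anything described in the interview: the state is just a position on a number line, the actions are steps of -1, 0, or +1, and the names `predict_next`, `choose_action`, and `rollout` are hypothetical. The only point is the shape of the loop: predict what comes next for each candidate action, then let that prediction guide the decision.

```python
from typing import Callable, Iterable

State = int   # hypothetical toy state: a position on a number line
Action = int  # hypothetical toy action: a step of -1, 0, or +1


def predict_next(state: State, action: Action) -> State:
    """Toy world model: predict the next state from (state, action)."""
    return state + action


def choose_action(
    state: State,
    actions: Iterable[Action],
    model: Callable[[State, Action], State],
    goal: State,
) -> Action:
    """Pick the action whose predicted next state lands closest to the goal."""
    return min(actions, key=lambda a: abs(model(state, a) - goal))


def rollout(start: State, goal: State, max_steps: int = 10) -> list[State]:
    """Repeatedly predict, decide, and act until the goal is reached."""
    trajectory = [start]
    state = start
    for _ in range(max_steps):
        if state == goal:
            break
        action = choose_action(state, (-1, 0, 1), predict_next, goal)
        # In this toy, the "real world" behaves exactly as the model predicts.
        state = predict_next(state, action)
        trajectory.append(state)
    return trajectory


if __name__ == "__main__":
    print(rollout(start=0, goal=3))
```

In a real system the prediction function would be learned and imperfect, and the state would be far richer than an integer; the sketch only shows how prediction feeds the decision.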

The idea isn’t new. Psychologist Kenneth Craik proposed it in 1943. Engineers used it to fly moon probes in the 1960s and 70s. Reinforcement learning uses it too.

Xie’s point is that a world model isn’t a particular algorithm or technique. It’s a destination. LLM researchers, video researchers, and robotics researchers are all walking toward it from different directions.

Video generation gets you closer than language alone, because to make a believable video, a model has to know things about the physical world — for example, that a cat has four legs, not three.

Still, Xie doesn’t think pixels are the final answer. Pixels are a grid we built for human eyes — a simulator made for watching, not for predicting. The point of a world model is to predict the world, not to render a nice-looking video.

11. How to Train a World Model: Download Humanity

If the LLM era meant downloading the whole internet, then the world model era means downloading humanity.

That sounds like science fiction, because the data scale is terrifying. LeCun gave an example: by the age of four, a child has taken in roughly 50 times more data through vision than the largest LLMs are trained on.
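A rough back-of-envelope calculation shows how a figure of that order can arise. The interview notes give only the "roughly 50 times" ratio; every constant below is an assumed round number chosen for illustration, in the spirit of the estimate LeCun has shared publicly, and should not be read as a measured value or as a quote from the interview.

```python
# All constants are ASSUMED round numbers for illustration only.
WAKING_HOURS_BY_AGE_FOUR = 16_000      # assumed hours awake in four years
SECONDS_PER_HOUR = 3_600
OPTIC_NERVE_FIBRES = 2_000_000         # assumed total across both eyes
BYTES_PER_FIBRE_PER_SECOND = 10        # assumed data rate per fibre

LLM_TRAINING_TOKENS = 10**13           # assumed size of a large text corpus
BYTES_PER_TOKEN = 2                    # assumed average bytes per token


def child_visual_bytes() -> int:
    """Estimated bytes reaching the brain through vision by age four."""
    seconds_awake = WAKING_HOURS_BY_AGE_FOUR * SECONDS_PER_HOUR
    return seconds_awake * OPTIC_NERVE_FIBRES * BYTES_PER_FIBRE_PER_SECOND


def llm_text_bytes() -> int:
    """Estimated bytes of text in a large LLM training corpus."""
    return LLM_TRAINING_TOKENS * BYTES_PER_TOKEN


def vision_to_text_ratio() -> float:
    """How many times larger the visual estimate is than the text estimate."""
    return child_visual_bytes() / llm_text_bytes()


if __name__ == "__main__":
    print(f"child, vision: {child_visual_bytes():.2e} bytes")
    print(f"LLM, text:     {llm_text_bytes():.2e} bytes")
    print(f"ratio:         {vision_to_text_ratio():.1f}x")
```

Under these assumptions the ratio comes out in the 50 to 60 range. Changing any single constant shifts the result proportionally, so the takeaway is the order of magnitude rather than the exact number.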

But starting from internet videos is a workable path, and Xie agrees. YouTube has huge amounts of data. The current issue is copyright and access. (The interviewer commented, “ByteDance has a real advantage,” and Xie agreed.)

Another interesting question: what is the product of a world model?

LLMs have chatbots—a huge success. Xie mentioned two directions: AI glasses (always-on real-world perception and smart decision-making) and robots (but the brain problem remains unsolved).

12. AI Companies Are in an Arms Race; AMI Labs Wants a “Grassroots Alliance”

On the endless hot topics and competition in AI, Xie noted that there is a huge value chain:

At the top are stories like AGI, scaling laws, LLMs. These stories define which benchmarks to compete on. Benchmarks decide where resources go. Resource allocation forces everyone onto the same track.

The result: everyone loses the ability to define the problem.

He gave an example: at Google, a researcher wanted to do representation learning work but was stopped after two weeks because the product cycle had to finish. It is not that people lack the desire or ability—it is that the value chain leaves no room.

What AMI Labs wants to do, Xie calls “reverse OpenAI.” Instead of taking shortcuts by downloading internet data, they work with real-world people who have specific problems and data to co‑build a world model. He compares it to how Mastercard once fought Visa: one small bank cannot beat Bank of America, but if many join forces to launch a credit card, they can compete. Hence a “grassroots alliance.”

13. LeCun’s Magnetism and the “Metaphysical” Side of Research

When talking about starting a company, Xie shared many details about working with Yann LeCun.

He admired LeCun’s many hobbies—astrophotography, building model aeroplanes, electronic music, sailing, watching films. LeCun came across as a deeply passionate and many‑sided person. Xie said that was part of why he wanted to work with him.

Beyond academics, LeCun also seems to have a personal pull for Xie. In Xie’s words, like Jobs and Musk, LeCun has a kind of “reality distortion field” that makes it easy to believe in what he is saying.

In a sense, Xie’s choice to work with LeCun reflects his broader view of world models: that they emerge through contact with the real world and through human relationships. The path is hard, but the problem is worth solving, and worth taking risks for.

This outlook shapes his research taste, his community, and even his career and startup decisions. He describes part of this as “metaphysical (玄学)”.

14. What Is True Intelligence?

Xie prefers not to define intelligence. Different animals have different kinds of it, and humans are just one kind.

He agrees with a point Rich Sutton has made: we treat LLMs that write code, win IMO gold medals, and help us reach Mars as impressive — but the truly hard thing is building a squirrel’s intelligence.

It is a deliberately non-anthropocentric view. If you can build a squirrel — give it its own goals, emotions, hunger, and ability to survive in the real world — then writing code or going to Mars becomes trivial by comparison.

The same shift applies to world models. Instead of asking when AGI will arrive, ask whether any robot today can do all the chores a 12-year-old can. In Xie’s view, that is the question every robotics company should be asking.

15. “I Am the Normal One”

Near the end of the interview, the interviewer asked Xie about something he had said: “I am not the chosen one, I am the normal one.”

Xie explained the line came from his favourite football coach, Jürgen Klopp. Klopp may dress like a punk and project confidence, but he still believes he is not the chosen one—just an ordinary person.

To me, this echoes Xie’s broader life philosophy. It is an attempt to step outside egocentrism and stay self‑aware. That may be why his research feels both grounded and otherworldly.

Appendix: Most influential AI papers mentioned by Xie in the interview

· Sutton, R. S. (1991). Dyna, an integrated architecture for learning, planning, and reacting. (Dyna)

· LeCun, Y., Bottou, L., Bengio, Y., & Haffner, P. (1998). Gradient-based learning applied to document recognition. (LeNet)

· Deng, J., Dong, W., Socher, R., Li, L.-J., Li, K., & Fei-Fei, L. (2009). ImageNet: A large-scale hierarchical image database. (ImageNet)

· Krizhevsky, A., Sutskever, I., & Hinton, G. E. (2012). ImageNet classification with deep convolutional neural networks. (AlexNet)

· Goodfellow, I., et al. (2014). Generative adversarial nets. (GAN)

· Girshick, R., Donahue, J., Darrell, T., & Malik, J. (2014). Rich feature hierarchies for accurate object detection and semantic segmentation. (R-CNN)

· Ren, S., He, K., Girshick, R., & Sun, J. (2015). Faster R-CNN: Towards real-time object detection with region proposal networks. (Faster R-CNN)

· He, K., Zhang, X., Ren, S., & Sun, J. (2016). Deep residual learning for image recognition. (ResNet)

· Vaswani, A., et al. (2017). Attention is all you need. (Transformer)

· Devlin, J., Chang, M.-W., Lee, K., & Toutanova, K. (2019). BERT: Pre-training of deep bidirectional transformers for language understanding. (BERT)

· Ho, J., Jain, A., & Abbeel, P. (2020). Denoising diffusion probabilistic models. (DDPM)

· Brown, T. B., et al. (2020). Language models are few-shot learners. (GPT-3)

· Mildenhall, B., et al. (2020). NeRF: Representing scenes as neural radiance fields for view synthesis. (NeRF)

· Dosovitskiy, A., et al. (2021). An image is worth 16x16 words: Transformers for image recognition at scale. (ViT)

· Radford, A., et al. (2021). Learning transferable visual models from natural language supervision. (CLIP)

· Rombach, R., Blattmann, A., Lorenz, D., Esser, P., & Ommer, B. (2022). High-resolution image synthesis with latent diffusion models. (LDM)

· Kerbl, B., Kopanas, G., Leimkühler, T., & Drettakis, G. (2023). 3D Gaussian splatting for real-time radiance field rendering. (3DGS)